Inside the vendor risk score

A vendor risk score shouldn’t be a black box. Here’s how a well-designed model actually works: from inherent risk to residual risk, and every assurance layer in between.

Why Is Third-Party Risk So Hard to Measure?

Plenty of third-party risk management (TPRM) programs collect certifications. Plenty collect questionnaires. But collecting documents isn’t the same thing as measuring risk. Building a real methodology, one that lets you compare wildly different vendors in a systematic, defensible way, is surprisingly difficult.

Two problems are especially stubborn. First, a vendor’s security posture can collapse in an instant. Breaches happen, and a vendor that looked airtight last quarter can be compromised tomorrow. Second, documents don’t necessarily reflect reality. A questionnaire answer saying “yes, we encrypt data at rest” and a SOC 2 report proving that same thing carry very different weight, and a rigorous risk model has to treat them differently.

The Foundation: Risk = Impact × Likelihood

Every credible risk model starts here. But collapsing both dimensions into a single number, without exposing them separately, discards nuance that practitioners genuinely need. We keep impact and likelihood distinct throughout the entire calculation, so you always understand why a score landed where it did.

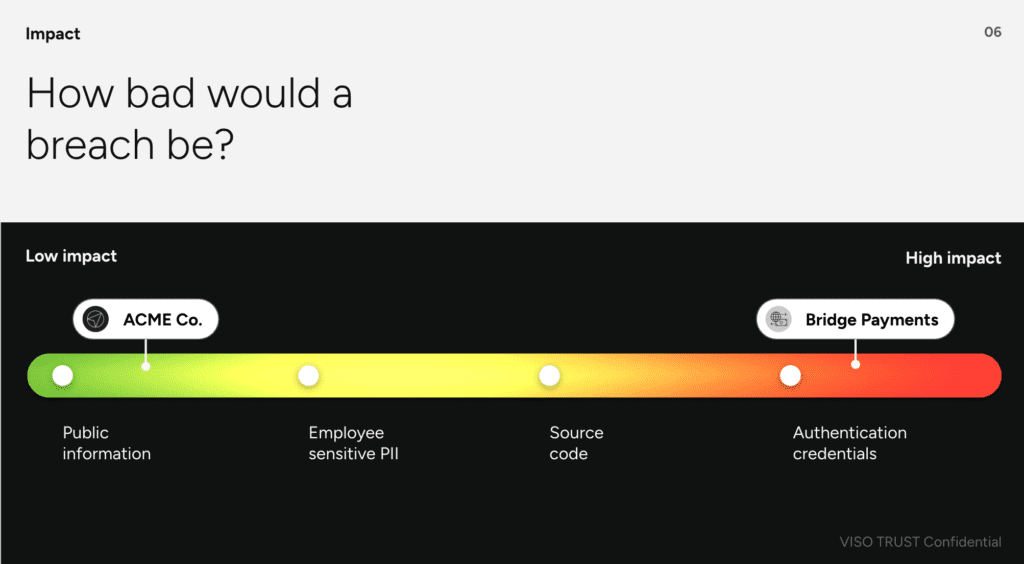

Impact: What data are you sharing?

Impact is driven entirely by the classification of the data you hand to a vendor. Take two example vendors. Acme Co. handles anonymized marketing data of low sensitivity. Bridge Payments touches authentication credentials and payment infrastructure, both extremely sensitive.

In the VISO TRUST platform, impact is a configurable number that maps to your organization’s own data classification scheme, so what counts as “high impact” can differ meaningfully from company to company depending on your regulatory environment.
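A minimal sketch of that mapping, assuming a hypothetical five-tier classification scheme scored 1 to 5 and assuming impact is set by the most sensitive data type shared. The tier names, the scale, and the `impact_for` helper are illustrative, not VISO TRUST’s actual configuration.

```python
# Hypothetical classification scheme: tier names and the 1-5 scale are
# illustrative assumptions. Edit this mapping to match your own scheme.
IMPACT_BY_CLASSIFICATION: dict[str, int] = {
    "anonymized": 1,
    "internal": 2,
    "confidential": 3,
    "regulated_personal": 4,
    "credentials_and_payments": 5,
}


def impact_for(data_types: list[str],
               scheme: dict[str, int] = IMPACT_BY_CLASSIFICATION) -> int:
    """Return the impact score for a vendor relationship.

    Assumption: impact is set by the most sensitive class of data shared.
    """
    if not data_types:
        raise ValueError("declare at least one data type shared with the vendor")
    return max(scheme[data_type] for data_type in data_types)


acme_impact = impact_for(["anonymized"])
bridge_impact = impact_for(["internal", "credentials_and_payments"])
print(acme_impact, bridge_impact)  # 1 5
```

Because the scheme is a plain dictionary, an organization under stricter regulation can raise a tier’s score without touching the scoring logic.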

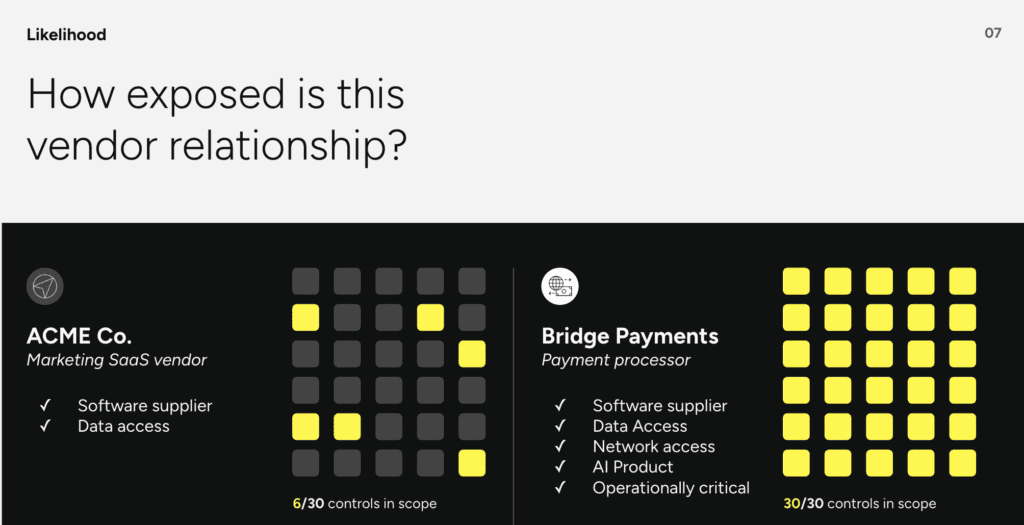

Likelihood: How exposed is this relationship?

Likelihood reflects the attack surface created by how you work with a vendor. At intake, you answer questions about the nature of the relationship: Is this a software supplier? Does the vendor have direct data access? Network access? Is it an AI product? Is it operationally critical?

Each answer maps to a set of controls in the framework. For Acme Co., the relationship context triggers 6 out of 30 controls. For Bridge Payments, all 30 controls are in scope. That difference in exposure, before a single document is reviewed, drives a dramatically different inherent risk rating.
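The mapping from intake answers to controls can be sketched as a union of control sets. The 30 control IDs, the six-controls-per-question split, and the question keys below are hypothetical, chosen only so the two example vendors reproduce the 6-of-30 and 30-of-30 counts.

```python
# Hypothetical 30-control framework with IDs C01..C30.
FRAMEWORK = [f"C{i:02d}" for i in range(1, 31)]

# Hypothetical mapping: each intake question brings six controls into scope.
CONTROLS_BY_QUESTION: dict[str, list[str]] = {
    "software_supplier": FRAMEWORK[0:6],
    "data_access": FRAMEWORK[6:12],
    "network_access": FRAMEWORK[12:18],
    "ai_product": FRAMEWORK[18:24],
    "operationally_critical": FRAMEWORK[24:30],
}


def controls_in_scope(answers: dict[str, bool]) -> set[str]:
    """Union of the controls triggered by every 'yes' answer at intake."""
    scope: set[str] = set()
    for question, answered_yes in answers.items():
        if answered_yes:
            scope.update(CONTROLS_BY_QUESTION[question])
    return scope


acme = {"software_supplier": False, "data_access": True, "network_access": False,
        "ai_product": False, "operationally_critical": False}
bridge = {question: True for question in CONTROLS_BY_QUESTION}

print(len(controls_in_scope(acme)), len(controls_in_scope(bridge)))  # 6 30
```

Using a set union means that in a real framework, where questions overlap, a control triggered by two answers is still counted once.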

“Collecting documents isn’t the same thing as measuring risk.” Gillian, Head of Product, VISO TRUST

Inherent Risk: Before Any Evidence

Inherent risk is what you get when you multiply impact by the full, unmitigated likelihood. It answers the question: How risky would this relationship be if the vendor had zero security controls in place? No certifications, no questionnaires, just the raw nature of the relationship.

Acme Co., with low-sensitivity data and only 6 controls in scope, lands at a low inherent risk. Bridge Payments, with highly sensitive data and all 30 controls in scope, lands at extreme inherent risk. This is before we look at a single piece of evidence.
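Continuing with the same hypothetical numbers, inherent risk can be computed on a 5×5 matrix. The linear mapping from exposure to a 1-to-5 likelihood, the equal weighting of controls, and the rating thresholds are all assumptions for illustration.

```python
FRAMEWORK_SIZE = 30  # total controls in the hypothetical framework


def likelihood(exposure: float) -> float:
    """Map an exposure fraction in [0, 1] onto a 1-5 likelihood scale (assumed linear)."""
    return 1 + 4 * exposure


def rating(score: float) -> str:
    """Hypothetical bands on a 1-25 (impact x likelihood) matrix."""
    if score < 5:
        return "low"
    if score < 10:
        return "medium"
    if score < 20:
        return "high"
    return "extreme"


def inherent_risk(impact: int, controls_in_scope: int) -> tuple[float, str]:
    """Impact x unmitigated likelihood: every in-scope control counts as absent."""
    exposure = controls_in_scope / FRAMEWORK_SIZE  # equal influence assumed
    score = impact * likelihood(exposure)
    return score, rating(score)


for name, impact, in_scope in [("Acme Co.", 1, 6), ("Bridge Payments", 5, 30)]:
    score, label = inherent_risk(impact, in_scope)
    print(f"{name}: {score:.1f} ({label})")
# Acme Co.: 1.8 (low)
# Bridge Payments: 25.0 (extreme)
```

Keeping `impact` and `likelihood` as separate inputs until the final multiplication is what lets the score be explained afterward.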

Residual Risk: After the Evidence

Residual risk is where the model gets interesting and where most platforms fall short. The formula is the same (Impact × Likelihood), but now likelihood is adjusted downward based on the mitigating controls the vendor can actually demonstrate.

Three factors govern how much any given control reduces the likelihood score:

1. Influence: Not all controls matter equally. Higher-influence controls carry more weight in the calculation.

2. Presence: Is there actual evidence that the control is in place? The platform weighs evidence against the stated requirements of each control.

3. Assurance: How credible is that evidence? The artifact type and the depth of verification both affect how much the score moves.
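One way to combine the three factors is to let each control remove `influence × presence × assurance` of its weight from the exposure. This multiplicative form, the 0-to-1 scales, and the `ControlEvidence` type are assumptions for illustration; the factors come from the text, but the formula does not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlEvidence:
    influence: float  # relative weight; higher-influence controls matter more
    presence: float   # 0.0 = no evidence, 1.0 = evidence fully meets the requirement
    assurance: float  # 0.0-1.0 credibility of the artifact behind the evidence

    def mitigation(self) -> float:
        """Amount of this control's influence that the evidence removes."""
        return self.influence * self.presence * self.assurance


def residual_likelihood(in_scope: list[ControlEvidence], framework_influence: float) -> float:
    """Likelihood on a 1-5 scale after subtracting demonstrated mitigation."""
    unmitigated = sum(c.influence - c.mitigation() for c in in_scope)
    return 1 + 4 * unmitigated / framework_influence


controls = [
    ControlEvidence(influence=3.0, presence=1.0, assurance=0.7),  # high influence, audited
    ControlEvidence(influence=1.0, presence=1.0, assurance=0.7),  # low influence, audited
    ControlEvidence(influence=2.0, presence=0.0, assurance=0.0),  # no evidence found
]
for c in controls:
    print(f"mitigation: {c.mitigation():.1f}")
print(f"residual likelihood: {residual_likelihood(controls, framework_influence=6.0):.2f}")
# mitigation: 2.1
# mitigation: 0.7
# mitigation: 0.0
# residual likelihood: 3.13
```

With no evidence at all, the same three controls would sit at the maximum likelihood of 5; the same audited evidence moves the score three times as far on the high-influence control as on the low-influence one.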

The Assurance Hierarchy

This is the detail that separates meaningful risk scores from checkbox exercises. Not all evidence is created equal, and the platform explicitly models that hierarchy:

- Vendor attestation: Self-reported questionnaire answers, lowest assurance, limited score reduction

- Third-party audit: SOC 2 or equivalent, higher assurance, but only for controls within the audit’s scope

- Tested controls: Auditor-verified test results, maximum assurance, greatest likelihood reduction

In practice, a vendor that answers a questionnaire perfectly will have a higher residual risk than a vendor that provides a SOC 2 report covering the same controls, because questionnaire evidence is inherently less credible. If the questionnaire-only vendor later provides a SOC 2, the platform automatically raises the assurance level for the covered controls and reduces the residual risk further.
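The hierarchy can be sketched as ordered assurance weights, with audit credit limited to controls inside the report’s scope. The numeric weights, the set-based evidence inputs, and the assumption of equal influence and full presence are hypothetical; only the ordering comes from the text.

```python
from collections.abc import Collection
from enum import Enum


class Assurance(Enum):
    # Hypothetical weights; only their ordering reflects the hierarchy above.
    NONE = 0.0
    VENDOR_ATTESTATION = 0.3
    THIRD_PARTY_AUDIT = 0.7
    TESTED_CONTROL = 0.9


def best_assurance(control: str, attested: Collection[str], audited: Collection[str],
                   tested: Collection[str]) -> Assurance:
    """Credit the strongest artifact available; audits count only for in-scope controls."""
    if control in tested:
        return Assurance.TESTED_CONTROL
    if control in audited:
        return Assurance.THIRD_PARTY_AUDIT
    if control in attested:
        return Assurance.VENDOR_ATTESTATION
    return Assurance.NONE


def residual_likelihood(controls: list[str], attested: Collection[str] = (),
                        audited: Collection[str] = (), tested: Collection[str] = (),
                        framework_size: int = 30) -> float:
    """1-5 likelihood, assuming equal influence and full presence wherever evidence exists."""
    unmitigated = sum(1 - best_assurance(c, attested, audited, tested).value for c in controls)
    return 1 + 4 * unmitigated / framework_size


controls = [f"C{i:02d}" for i in range(1, 11)]
questionnaire_only = residual_likelihood(controls, attested=set(controls))
with_soc2 = residual_likelihood(controls, audited=set(controls))
# The questionnaire-only vendor later uploads a SOC 2 covering the same controls.
updated = residual_likelihood(controls, attested=set(controls), audited=set(controls))
print(f"{questionnaire_only:.2f} {with_soc2:.2f} {updated:.2f}")  # 1.93 1.40 1.40
```

Picking the strongest artifact per control, rather than averaging, means new evidence can only raise assurance and never dilutes what is already on file.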

“What actually moves the risk score is often assurance.” Gillian, Head of Product, VISO TRUST

What This Looks Like in Practice

Returning to our two vendors: Acme Co. starts at low inherent risk. With evidence found for 3 of its 6 in-scope controls, its residual risk drops further. It is still low, but not as low as it would be if all 6 controls were demonstrated with high-assurance artifacts. Bridge Payments starts at extreme inherent risk and, with 15 of 30 controls evidenced, lands at high residual risk. The risk matrix makes the reason visible: evidence reduces only likelihood, never impact, so even maximum assurance can move a high-impact vendor only so far down the matrix.
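As a rough illustration, the two vendors can be run end to end under hypothetical assumptions: impacts of 1 and 5, equal control influence, full presence for evidenced controls, an audit-level assurance weight of 0.7, and made-up rating bands. The numbers below come from these assumptions, not from the platform.

```python
FRAMEWORK_SIZE = 30
AUDIT_ASSURANCE = 0.7   # hypothetical SOC 2-level weight
TESTED_ASSURANCE = 0.9  # hypothetical tested-control weight


def rating(score: float) -> str:
    """Hypothetical bands on a 1-25 (impact x likelihood) matrix."""
    for limit, label in [(5, "low"), (10, "medium"), (20, "high")]:
        if score < limit:
            return label
    return "extreme"


def risk(impact: int, in_scope: int, evidenced: int = 0,
         assurance: float = AUDIT_ASSURANCE) -> float:
    """Impact x likelihood; each evidenced control removes `assurance` of its weight."""
    unmitigated = (in_scope - evidenced) + evidenced * (1 - assurance)
    return impact * (1 + 4 * unmitigated / FRAMEWORK_SIZE)


vendors = {"Acme Co.": (1, 6, 3), "Bridge Payments": (5, 30, 15)}
for name, (impact, in_scope, evidenced) in vendors.items():
    inherent = risk(impact, in_scope)  # no evidence: inherent risk
    residual = risk(impact, in_scope, evidenced)
    print(f"{name}: inherent {inherent:.1f} ({rating(inherent)}), "
          f"residual {residual:.1f} ({rating(residual)})")
# Acme Co.: inherent 1.8 (low), residual 1.5 (low)
# Bridge Payments: inherent 25.0 (extreme), residual 18.0 (high)

# Acme with all six controls demonstrated as tested controls instead.
print(f"{risk(1, 6, 6, TESTED_ASSURANCE):.2f}")  # 1.08
```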

Some vendors cannot reach a “low” residual risk no matter how well documented they are, and that’s intentional. The model is being honest about the nature of the relationship.
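Under the same hypothetical assumptions, the floor is easy to see: even if every in-scope control is demonstrated at maximum assurance, likelihood cannot drop below 1, so residual risk cannot drop below the impact score. The weights and bands remain illustrative.

```python
FRAMEWORK_SIZE = 30
MAX_ASSURANCE = 0.9  # hypothetical weight for auditor-tested controls


def rating(score: float) -> str:
    """Hypothetical bands on a 1-25 (impact x likelihood) matrix."""
    for limit, label in [(5, "low"), (10, "medium"), (20, "high")]:
        if score < limit:
            return label
    return "extreme"


def best_case_residual(impact: int, in_scope: int) -> float:
    """Residual risk when every in-scope control is tested at maximum assurance."""
    unmitigated = in_scope * (1 - MAX_ASSURANCE)
    likelihood = 1 + 4 * unmitigated / FRAMEWORK_SIZE  # never below 1.0
    return impact * likelihood


for name, impact, in_scope in [("Acme Co.", 1, 6), ("Bridge Payments", 5, 30)]:
    floor = best_case_residual(impact, in_scope)
    print(f"{name}: best case {floor:.2f} ({rating(floor)})")
# Acme Co.: best case 1.08 (low)
# Bridge Payments: best case 7.00 (medium)
```

In this sketch, an impact of 5 already sits at the bottom of the medium band, so no evidence package can bring Bridge Payments down to low.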

What Good Looks Like for a TPRM Program

From our perspective, a well-designed program does four things:

Consistent inputs. Scores are only comparable if the inputs are standardized. Apples-to-apples comparison across vendors requires a consistent framework applied the same way every time.

Controls weighted by influence. Not every control matters equally. The model should reflect that.

Real evidence, not just attestations. Questionnaire answers are a starting point, not a finish line. The model should reward higher-assurance artifacts.

Continuous monitoring. Risk posture can change overnight. A point-in-time assessment that sits in a drawer for 12 months isn’t a risk program; it’s a compliance exercise.

“The risk score is deterministic, and that’s by design. It’s configurable, entirely consistent, entirely predictable, results you can stand behind.”

Paul, Co-Founder, VISO TRUST

The Executive Conversation

When a vendor comes back as high or extreme risk, the first question from leadership is always some version of: “Why? Everyone else uses this vendor.” The second is: “What does this mean for our business?”

A high risk rating doesn’t automatically mean “don’t use this vendor.” It means the opportunity has to outweigh the risk, and that’s a business decision, not a security decision. The security team’s job is to give leadership everything they need to make that call with full information: what controls are missing, where assurance fell short, what the impact would be given the data being shared, and what compensating controls might change the picture.

A deterministic, explainable risk model makes that conversation possible. A black-box score makes it nearly impossible.
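Explainability can be as simple as ranking controls by the risk they leave unmitigated and attaching a reason to each. The `ControlResult` type, the reason labels, the 0.7 attestation cutoff, and the control names are all hypothetical. Sorting with a fixed tie-breaker means the same inputs always produce the same explanation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlResult:
    name: str
    influence: float  # relative weight of the control
    presence: float   # 0.0-1.0: how fully evidence meets the requirement
    assurance: float  # 0.0-1.0: credibility of the best artifact

    @property
    def unmitigated(self) -> float:
        """Share of this control's influence still counted toward likelihood."""
        return self.influence * (1 - self.presence * self.assurance)

    @property
    def reason(self) -> str:
        if self.presence == 0:
            return "control missing: no evidence found"
        if self.presence < 1:
            return "evidence only partially meets the requirement"
        if self.assurance < 0.7:  # hypothetical cutoff below audit-level assurance
            return "assurance fell short: vendor attestation only"
        return "evidenced with audit-level assurance"


def explain(results: list[ControlResult], top: int = 3) -> list[str]:
    """Largest contributors first; ties broken by name so output is deterministic."""
    ranked = sorted(results, key=lambda r: (-r.unmitigated, r.name))
    return [f"{r.name}: {r.unmitigated:.2f} ({r.reason})" for r in ranked[:top]]


results = [
    ControlResult("Admin MFA", influence=3.0, presence=0.0, assurance=0.0),
    ControlResult("Encryption at rest", influence=2.0, presence=1.0, assurance=0.3),
    ControlResult("Incident response", influence=1.0, presence=1.0, assurance=0.7),
    ControlResult("Access reviews", influence=1.0, presence=0.5, assurance=0.9),
]
for line in explain(results):
    print(line)
# Admin MFA: 3.00 (control missing: no evidence found)
# Encryption at rest: 1.40 (assurance fell short: vendor attestation only)
# Access reviews: 0.55 (evidence only partially meets the requirement)
```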

The Hardest Thing to Change

What should security leaders change first about their TPRM programs? The answer is pointed: accuracy at scale. Do you have visibility into your entire vendor population? Do you know your fourth-party landscape? And is the information you’re working with actually accurate, not just comprehensive?

For too long, the limitations of manual, questionnaire-based processes pushed teams toward a check-the-box mentality. The technology now exists to do something genuinely meaningful at scale. Third-party breaches can’t be eliminated, but their frequency and impact can absolutely be reduced.